Hey, I have managed to get it working.

opened 08:06AM - 21 May 25 UTC

closed 06:48AM - 22 May 25 UTC

bug

### Description

Hi,

this might be the wrong place to ask, but the deeplearning… .ai page doesn't have a Q&A section or anywhere else to ask.

I am currently working through the second tutorial of "Practical Multi AI Agents and Advanced Use Cases" with crewAI.

The tutorial uses Trello, but since I don't use Trello, I wanted to try building a custom tool for Asana instead.

The tool itself seems to run fine. I pass my workspace and project as inputs, and the LLM correctly calls the tool with them and gets a response.

However, the LLM seems unable to handle the response. The tool formats the list of tasks it gets from the project into a string to make it easier for the model to understand, but I get the following IndexError:

```text
# Agent: Data Collection Specialist
## Task: Create an initial understanding of the project, its main tasks and the team working on it. Use the Asana Data Fetcher tool to gather data from the Asana project.

🤖 Agent: Data Collection Specialist
    Status: In Progress
└── 🧠 Thinking...

🤖 Agent: Data Collection Specialist
    Status: In Progress

🤖 Agent: Data Collection Specialist
    Status: In Progress

DEBUG: BoardDataFetcherTool _run kwargs: {'workspace_name': 'myworkspace', 'project_name': 'myproject'}

# Agent: Data Collection Specialist
## Thought: Action: Asana Board Data Fetcher
## Using tool: Asana Board Data Fetcher
## Tool Input:
"{\"workspace_name\": \"myworkspace\", \"project_name\": \"myproject\"}"
## Tool Output:
### Asana Tasks:
- **Check out freqtrade options** (GID: 1207913426279748)
- **Check if mongodb might be an option for storing trades** (GID: 1207913426279737)
- **Reset alarms ** (GID: 1207913426279733)
- **Make helper ** (GID: 1207913426279729)
- **Remove DBHelper from usage, but keep for later** (GID: 1208664419290176)
- **refactor main ** (GID: 1208664419290180)
- **Improve calculation** (GID: 1208664419290184)
- **Improve saving of inf** (GID: 1208664419290186)
- **Setup proper retrieval and analysis with db** (GID: 1207913426279746)

🤖 Agent: Data Collection Specialist
    Status: In Progress
└── 🧠 Thinking...

Provider List: https://docs.litellm.ai/docs/providers

🚀 Crew: crew
└── 📋 Task: f648f16c-cd0a-4e90-b64a-59acbf76cac4
    Status: Executing Task...
    └── 🤖 Agent: Data Collection Specialist
        Status: In Progress
        └── ❌ LLM Failed

LLM Error:
❌ LLM Call Failed
Error: litellm.APIConnectionError: list index out of range
Traceback (most recent call last):
  File "C:\Users\username\AppData\Roaming\Python\Python311\site-packages\litellm\main.py", line 2904, in completion
    response = base_llm_http_handler.completion(
               ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^
  File "C:\Users\username\AppData\Roaming\Python\Python311\site-packages\litellm\llms\custom_httpx\llm_http_handler.py", line 270, in completion
    data = provider_config.transform_request(
           ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^
  File "C:\Users\username\AppData\Roaming\Python\Python311\site-packages\litellm\llms\ollama\completion\transformation.py", line 322, in transform_request
    modified_prompt = ollama_pt(model=model, messages=messages)
                      ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^
  File "C:\Users\username\AppData\Roaming\Python\Python311\site-packages\litellm\litellm_core_utils\prompt_templates\factory.py", line 229, in ollama_pt
    tool_calls = messages[msg_i].get("tool_calls")
                 ~~~~~~~~^^^^^^^
IndexError: list index out of range
```

I am using Ollama for the agent with the "llama3.1:8b" model (I've used it in previous tutorials, where it worked fine and was able to use tools as well).

### Steps to Reproduce

This is the custom tool I set up:

```python
import os
from typing import Optional, Type

import requests
from crewai.tools import BaseTool
from pydantic import BaseModel, Field

ASANA_API = "https://app.asana.com/api/1.0"


class AsanaInput(BaseModel):
    """Input for fetching data from an Asana board."""

    workspace_name: str = Field(..., description="Name of the Asana workspace")
    project_name: str = Field(..., description="Name of the project within the workspace")


class BoardDataFetcherTool(BaseTool):
    name: str = "Asana Board Data Fetcher"
    description: str = "Fetches tasks from an Asana project using workspace name and project name"
    args_schema: Type[BaseModel] = AsanaInput
    # Read when the class is defined; raises KeyError if the variable is not set.
    access_token: str = os.environ["ASANA_ACCESS_TOKEN"]

    def _headers(self) -> dict:
        return {"Authorization": f"Bearer {self.access_token}"}

    def _get_workspace_gid(self, workspace_name: str) -> Optional[str]:
        """Get workspace GID from workspace name (case-insensitive)."""
        response = requests.get(f"{ASANA_API}/workspaces", headers=self._headers(), timeout=30)
        if response.status_code == 200:
            for workspace in response.json().get("data", []):
                if workspace["name"].lower() == workspace_name.lower():
                    return workspace["gid"]
        return None

    def _get_project_gid(self, workspace_gid: str, project_name: str) -> Optional[str]:
        """Get project GID from project name within a workspace (case-insensitive)."""
        response = requests.get(
            f"{ASANA_API}/projects",
            headers=self._headers(),
            params={"workspace": workspace_gid},
            timeout=30,
        )
        if response.status_code == 200:
            for project in response.json().get("data", []):
                if project["name"].lower() == project_name.lower():
                    return project["gid"]
        return None

    def _run(self, **kwargs) -> str:
        """
        Fetch all tasks from the specified workspace and project.

        Args:
            kwargs: Dictionary containing workspace_name and project_name

        Returns:
            str: Markdown list of task names and GIDs, or an error message
        """
        # The docstring must be the first statement, so the debug print comes after it.
        print("DEBUG: BoardDataFetcherTool _run kwargs:", kwargs)

        workspace_name = kwargs.get("workspace_name")
        project_name = kwargs.get("project_name")
        # Return plain strings throughout so the agent always receives text.
        if not workspace_name or not project_name:
            return "Error: missing workspace_name or project_name"

        try:
            workspace_gid = self._get_workspace_gid(workspace_name)
            if not workspace_gid:
                return f"Error: workspace '{workspace_name}' not found"

            project_gid = self._get_project_gid(workspace_gid, project_name)
            if not project_gid:
                return f"Error: project '{project_name}' not found in workspace '{workspace_name}'"

            response = requests.get(
                f"{ASANA_API}/projects/{project_gid}/tasks",
                headers=self._headers(),
                timeout=30,
            )
            if response.status_code != 200:
                return f"Failed to fetch tasks. Status code: {response.status_code}"

            tasks = response.json().get("data", [])
            if not tasks:
                return "No tasks found in this project."

            output = "### Asana Tasks:\n"
            for t in tasks:
                output += f"- **{t['name']}** (GID: {t['gid']})\n"
            return output
        except Exception as e:
            return f"Error: an error occurred: {e}"
```
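The name lookup and the final formatting step in the tool are easy to check without calling Asana if they are pulled out into pure functions. A minimal sketch: `find_gid_by_name` and `format_tasks` are hypothetical helper names, and the sample dicts only mimic the `name`/`gid` fields the tool reads from the `data` array of Asana's responses.

```python
from typing import Optional


def find_gid_by_name(items: list[dict], name: str) -> Optional[str]:
    """Return the 'gid' of the first item whose 'name' matches, ignoring case."""
    target = name.lower()
    for item in items:
        if item.get("name", "").lower() == target:
            return item["gid"]
    return None


def format_tasks(tasks: list[dict]) -> str:
    """Render task dicts as the same Markdown list the tool returns to the agent."""
    if not tasks:
        return "No tasks found in this project."
    lines = ["### Asana Tasks:"]
    lines += [f"- **{t['name']}** (GID: {t['gid']})" for t in tasks]
    return "\n".join(lines) + "\n"


# Hypothetical sample data shaped like Asana's "data" array
sample = [
    {"gid": "1", "name": "Reset alarms"},
    {"gid": "2", "name": "Improve calculation"},
]
print(find_gid_by_name(sample, "improve CALCULATION"))  # 2
print(format_tasks(sample))
```

Checking the output this way confirms the tool hands the agent a plain string, which matches the log: the tool call succeeds and the failure only happens in the following LLM call.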

### Expected behavior

I'd expect the LLM to accept the returned string and continue working with the new information.

### Screenshots/Code snippets

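```python
from crewai import Crew

# data_collection_agent, data_collection, workspace_name and project_name
# are defined elsewhere in the script (not shown).

# Creating the crew
crew = Crew(
    agents=[
        data_collection_agent,
        # analysis_agent,
    ],
    tasks=[
        data_collection,
        # data_analysis,
        # report_generation,
    ],
    verbose=True,
    embedder={
        "provider": "ollama",
        "config": {"model": "nomic-embed-text:latest"},
    },
)

# Inputs that fill the {workspace_name} / {project_name} placeholders in the YAML
inputs = {
    "workspace_name": workspace_name,
    "project_name": project_name,
}

# Run the crew
result = crew.kickoff(inputs=inputs)
```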
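crewAI substitutes the `inputs` passed to `crew.kickoff()` into the `{workspace_name}` and `{project_name}` placeholders of the agent and task definitions, which is how the names reach the agent and, from there, the tool call. The sketch below is only a simplified illustration using Python's `str.format_map`, not crewAI's own interpolation code; `myworkspace`/`myproject` are the placeholder values from the debug log.

```python
# Simplified illustration only: crewAI performs its own interpolation internally.
# This just shows the effect of filling "{name}" placeholders from a dict.
goal_template = (
    "Gather all relevant data from the asana project in the given workspace. "
    "workspace name is {workspace_name} "
    "project name is {project_name}"
)

inputs = {"workspace_name": "myworkspace", "project_name": "myproject"}

goal = goal_template.format_map(inputs)
print(goal)
```

Because the agent YAML uses folded scalars (`>`), its three goal lines are joined with spaces when loaded, which is why the template here is written as a single line.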

### Operating System

Windows 10

### Python Version

3.11

### crewAI Version

0.119.0

### crewAI Tools Version

0.45.0

### Virtual Environment

Venv

### Evidence

Same `litellm.APIConnectionError: list index out of range` traceback as shown in the description above.

### Possible Solution

I'm assuming that there is something wrong with my setup, but looking at the documentation it seems to be correct. I'm unsure what to do. I've tried debugging and hit a wall.

### Additional context

I use Ollama with llama3.1:8b

The agent YAML:

```yaml
data_collection_agent:
  role: >
    Data Collection Specialist
  goal: >
    Gather all relevant data from the asana project in the given workspace.
    workspace name is {workspace_name}
    project name is {project_name}
  backstory: >
    You are responsible for ensuring that all project
    data is collected accurately.
  allow_delegation: false
  verbose: true
```

The task YAML:

```yaml
data_collection:
  description: >
    Create an initial understanding of the project, its main
    tasks and the team working on it.
    Use the Asana Data Fetcher tool to gather data from the
    Asana project.
  expected_output: >
    A full blown report on the project, including its main
    features, the team working on it,
    and any other relevant information from the Asana board.
```

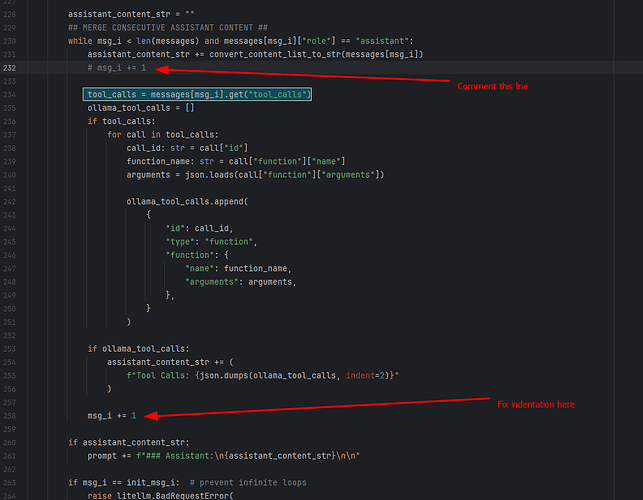

So this is not a crewAI bug but a LiteLLM bug.

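Search LiteLLM's source for this comment (the traceback points to `ollama_pt` in `litellm_core_utils/prompt_templates/factory.py`):

`## MERGE CONSECUTIVE ASSISTANT CONTENT ##`

and make these two code changes.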

After this, you will be able to run your crews successfully using MCP tools with Ollama as the LLM provider.
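The traceback ends at `tool_calls = messages[msg_i].get("tool_calls")`, i.e. an index that has run past the end of the message list. The two changes themselves are not spelled out in the text, so this is only a hypothetical, simplified sketch of that class of off-by-one bug and its usual fix. `merge_assistant_buggy`, `merge_assistant_fixed` and the message dicts are made up for illustration and are not LiteLLM's code. In this sketch the bug only triggers when the conversation ends with an assistant turn, which would fit the log, where the failure comes right after the tool output.

```python
# Hypothetical, simplified message-merging loops (NOT LiteLLM's actual code).


def merge_assistant_buggy(messages: list[dict], msg_i: int) -> tuple[str, int]:
    content = ""
    while msg_i < len(messages) and messages[msg_i]["role"] == "assistant":
        content += messages[msg_i].get("content") or ""
        msg_i += 1  # index advanced too early...
        tool_calls = messages[msg_i].get("tool_calls")  # ...IndexError if that was the last message
        if tool_calls:
            content += f" [{len(tool_calls)} tool call(s)]"
    return content, msg_i


def merge_assistant_fixed(messages: list[dict], msg_i: int) -> tuple[str, int]:
    content = ""
    while msg_i < len(messages) and messages[msg_i]["role"] == "assistant":
        content += messages[msg_i].get("content") or ""
        # Read everything needed from the current message before moving on.
        tool_calls = messages[msg_i].get("tool_calls")
        if tool_calls:
            content += f" [{len(tool_calls)} tool call(s)]"
        msg_i += 1
    return content, msg_i


# A conversation whose last message is an assistant turn
messages = [
    {"role": "user", "content": "Fetch the Asana tasks."},
    {"role": "assistant", "content": "Thought: ..."},
]

try:
    merge_assistant_buggy(messages, 1)
except IndexError as exc:
    print("buggy:", exc)  # buggy: list index out of range
print("fixed:", merge_assistant_fixed(messages, 1))  # fixed: ('Thought: ...', 2)
```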

I have tested it with these versions:

- crewai 0.134.0
- crewai-tools 0.48.0

Here is my sample code:

```python
from crewai import Agent, Crew, LLM, Process, Task
from crewai_tools import MCPServerAdapter
from langchain_openai import ChatOpenAI
from mcp import StdioServerParameters

# from langchain.llms import Ollama

server_params = StdioServerParameters(
    command="uv",  # Or your python3 executable, i.e. "python3"
    args=["run", "/home/jon/doe/load_testing_stdio/server.py"],
)

with MCPServerAdapter(server_params) as tools:
    print(f"Available tools from Stdio MCP server: {[tool.name for tool in tools]}")

    # my_llm = LLM(
    #     model="ollama/llama3.2",
    #     base_url="http://localhost:11434",
    #     streaming=True
    # )

    # my_llm = Ollama(model="ollama/llama3.2")

    my_llm = ChatOpenAI(
        model="ollama/llama3.2",
        base_url="http://localhost:11434",
        api_key="sk-ollama",
        stream=True,
    )

    # Example: Using the tools from the Stdio MCP server in a CrewAI Agent
    agent = Agent(
        role="Hash Calculator",
        goal="compute hash of a given string",
        backstory="You are a hash calculator, you compute hash of a given string",
        tools=tools,
        verbose=True,
        allow_delegation=False,
        llm=my_llm,
    )

    task = Task(
        description="Compute hash for the string 'hello world'",
        expected_output="return hash of specified string.",
        agent=agent,
        verbose=True,
    )

    crew = Crew(
        agents=[agent],
        tasks=[task],
        verbose=True,
        process=Process.sequential,
    )

    result = crew.kickoff()
    print(result)
```

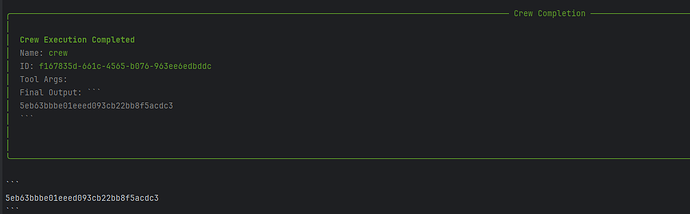

Here is a screenshot of my crew completing successfully.

In case you want to test it quickly, here is my MCP server:

```python
import hashlib
import time

from loguru import logger
from mcp.server.fastmcp import FastMCP

# Initialize FastMCP server
mcp = FastMCP("mcp-1")


@mcp.tool()
def generate_md5_hash(input_str: str) -> str:
    """Return the MD5 hex digest of the input string."""
    logger.info(f"Generating MD5 hash for: {input_str}")
    # Create an md5 hash object
    md5_hash = hashlib.md5()
    # Forced 20-second delay
    time.sleep(20)
    # Update the hash object with the bytes of the input string
    md5_hash.update(input_str.encode("utf-8"))
    # Return the hexadecimal representation of the digest
    return md5_hash.hexdigest()


@mcp.tool()
def count_characters(input_str: str) -> int:
    """Return the number of characters in the input string."""
    logger.info(f"Counting characters in: {input_str}")
    return len(input_str)


@mcp.tool()
def get_first_half(input_str: str) -> str:
    """Return the first half of the input string (midpoint rounded down)."""
    logger.info(f"Getting first half of: {input_str}")
    # Calculate the midpoint of the string
    midpoint = len(input_str) // 2
    # Return the first half of the string
    return input_str[:midpoint]


if __name__ == "__main__":
    # Initialize and run the server over stdio
    mcp.run(transport="stdio")
```
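To check the crew's answer for the `'hello world'` task without starting the server, the same logic can be run as plain functions. A minimal sketch that mirrors the three tools above but drops the `@mcp.tool()` decorator, the logging, and the 20-second sleep:

```python
import hashlib


def generate_md5_hash(input_str: str) -> str:
    # Same result as the MCP tool, without the artificial delay
    return hashlib.md5(input_str.encode("utf-8")).hexdigest()


def count_characters(input_str: str) -> int:
    return len(input_str)


def get_first_half(input_str: str) -> str:
    return input_str[: len(input_str) // 2]


text = "hello world"
print(generate_md5_hash(text))  # 5eb63bbbe01eeed093cb22bb8f5acdc3
print(count_characters(text))   # 11
print(get_first_half(text))     # hello
```

If the agent's final answer differs from the digest printed here, the model did not actually use the tool result.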